Ever found an amazing website and thought, “I need to save this before it’s gone”?

Learning how to download a website to run locally on a Mac is easier than you think. And I’m going to show you exactly how to do it.

I’m a web developer. I’ve been downloading websites for over six years now. Sometimes it’s for client projects. Other times, it’s personal: saving tutorials, articles, or sites I just don’t want to lose.

Here’s what nobody tells you: websites vanish constantly. That cooking blog you love? Could disappear tomorrow. That coding tutorial? Gone next week. Companies shut down. People abandon projects. Content gets deleted.

Maybe you’re heading out on a long flight. Airport WiFi is garbage. Airplane internet? Even worse, and expensive.

Or you’re studying web design. You want to see how the pros build their sites. Check out the actual code. Learn from real examples.

Whatever your reason, I’ve got you covered.

In this guide, I’ll walk through four methods. I tested each one on my own MacBook, and they all work whether you’re running Monterey, Ventura, or Sonoma.

We’ll start simple. Then level up if you want more control. By the end, you’ll have websites saved on your computer. Browse them offline. Anytime you want. Zero internet needed.

Why You’d Want to Do This in the First Place

Before we jump into the actual steps, let me tell you why this is such a useful skill to have.

You’re Building Websites

If you’re learning web development or already building sites, having local copies is a game-changer. You can peek under the hood of professionally-built websites and see exactly how they work. No more guessing. You can see the actual code, the file structure, everything.

I learned so much this way when I was starting out. It’s like having a backstage pass to see how the pros do it.

You Need Stuff Offline

Going somewhere without reliable internet? Download the sites you need beforehand. I do this before every long flight. Tutorial sites, documentation, even entire blogs – all available without a connection.

Beats staring at the seat in front of you for six hours, right?

You’re Archiving Something Important

Websites vanish all the time. Seriously, it happens more than you’d think. Companies shut down, people abandon their blogs, content gets taken down.

If there’s something you really value, grab a copy while you can. I’ve been burned by this before – went back to find an article I loved, and poof, the whole site was gone.

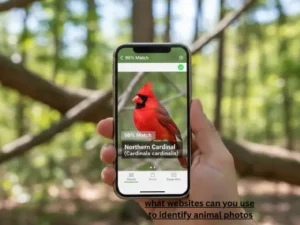

You Want to Study Real Examples

There’s no better way to learn than by studying sites you admire. Want to know how that cool animation works? How they structured their navigation? Download it and find out.

It’s like having a textbook that shows you real-world examples instead of made-up ones.

What You’ll Need Before You Start

Don’t worry, you probably have most of this already.

You need a Mac (obviously). Any recent version of macOS works fine. I’m on Sonoma right now, but this stuff works on older versions too.

Make sure you have some free storage space. How much depends on the website size. A simple blog? Maybe 50-100MB. A huge site with tons of images and videos? Could be several gigabytes.
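If you want a quick number before you start, here’s a rough sketch for checking free space from Terminal. The function name free_gb is just something made up for this example, and it assumes the standard df and awk tools that ship with macOS.

```shell
# Print the free space (in whole gigabytes) on the volume that holds a folder.
# Defaults to the current folder if no path is given.
free_gb() {
  target="${1:-.}"
  # df -Pk prints one POSIX-format line per volume in 1024-byte blocks;
  # column 4 of the second line is the available space.
  df -Pk "$target" | awk 'NR == 2 { printf "%d\n", $4 / 1024 / 1024 }'
}

echo "Free space here: $(free_gb) GB"
```

Compare that number against your guess for the site. If it’s tight, set a download limit before you begin.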

You’ll need internet to download the site initially. Kind of a no-brainer, but worth mentioning.

That’s basically it. For one of the methods, you’ll use the terminal, but I’ll guide you through every single step. Even if you’ve never touched it before.

Hold Up – Is This Even Legal?

Okay, let’s talk about the elephant in the room.

Downloading a website for your own personal, offline use is generally fine. Saving content so you can read it later isn’t what gets people into trouble.

But here’s where it gets tricky.

You can’t just download a site and then republish it as your own. That’s copyright infringement, plain and simple. Don’t do it.

You also can’t sell downloaded content or use it commercially without permission. That’s a fast track to legal trouble.

Some websites explicitly say “don’t copy our stuff” in their terms of service. Respect that. If a site doesn’t want to be downloaded, don’t download it.

Think of it like this – recording a TV show to watch later? Perfectly fine. Recording it and then selling DVDs? That’s illegal.

Use common sense and respect people’s work. Download for learning, reference, or personal backup, and you’re golden.

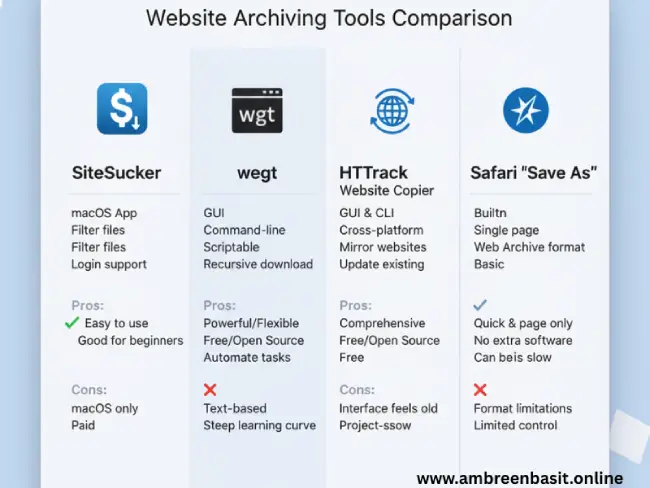

Method 1: SiteSucker (The Easy Way)

This is where I tell beginners to start. SiteSucker makes the whole process ridiculously simple.

It’s a Mac app that downloads entire websites automatically. You just paste a URL and hit go. That’s it.

Getting SiteSucker Set Up

Head to the official SiteSucker website, which points you to the current release. Current versions are distributed through the Mac App Store, and pricing has changed over the years, so check the listing rather than assuming it’s free. There’s also a Pro edition with extra features, but honestly, the standard one does everything you need.

Once it’s installed, you’ll find it in your Applications folder like any other Mac app.

Using It to Download a Site

Open SiteSucker. The interface is clean and simple: no million buttons or confusing options.

You’ll see a URL field at the top. Paste in the website address you want to download. For example, if you want to download example.com, just paste https://example.com in there.

Tweaking the Settings (Optional But Smart)

Before you hit download, click the little gear icon to adjust settings.

Here’s what I usually do:

I set a download limit under Settings > Limits. This is super important if you’re downloading a big site. You don’t want to accidentally download 50GB when you only wanted the main pages.

Check what file types you’re grabbing. By default, SiteSucker downloads everything – HTML, images, CSS, JavaScript, the works. That’s usually what you want, but you can exclude stuff if needed.

Let It Rip

Hit Enter or click the Download button.

Now SiteSucker goes to work. You’ll see files downloading in real-time. It’s actually pretty satisfying to watch.

How long this takes depends entirely on the site size. A small blog? Maybe five minutes. A huge site with thousands of pages? Grab a coffee and come back later.

Finding Your Downloaded Site

When it’s done, SiteSucker saves everything in a folder on your Mac. Usually in Downloads or Documents.

Open that folder and look around. You’ll see it organized exactly like the original website – folders for images, stylesheets, scripts, all that good stuff.

Find the file called index.html and double-click it. Your browser opens up and boom – there’s the website, running locally on your Mac. No internet needed.

The Good and Not-So-Good

SiteSucker is awesome for most basic websites. Blogs, portfolios, documentation sites – it handles them beautifully.

But it does have limits. Really modern sites that rely heavily on JavaScript can be tricky. Web apps with lots of interactive features might not work perfectly offline.

Still, for probably 80% of what you’ll want to download, SiteSucker is perfect. And you can’t beat how easy it is to use.

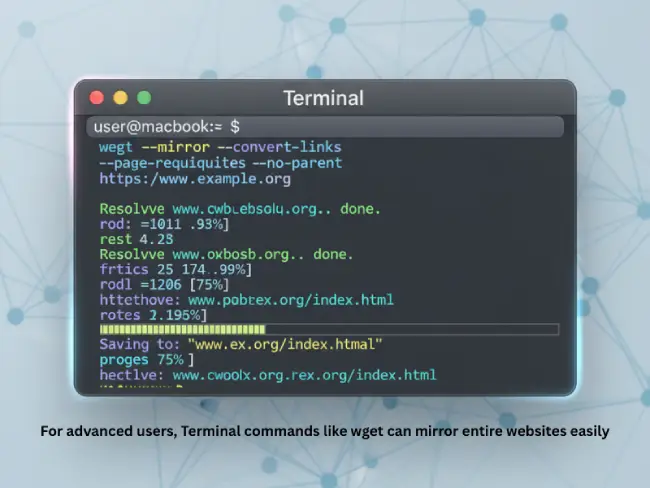

Method 2: wget Through Terminal (For When You Want More Control)

Alright, this one’s a bit more advanced. But stick with me. It’s not as scary as it sounds.

Wget is a command-line tool that’s been around forever. It’s super powerful and gives you way more control than any GUI app.

Getting Homebrew First

Before we can use wget, you need Homebrew. It’s basically an app store for command-line tools.

Open Terminal. You can find it by hitting Command + Space, typing “Terminal”, and pressing Enter.

Copy and paste this command:

    /bin/bash -c "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/HEAD/install.sh)"

Hit Enter and follow what it tells you to do. It takes a few minutes to install. Don’t panic if you see a bunch of text scrolling by – that’s normal.

Installing wget

Once Homebrew is ready, type this:

    brew install wget

Press Enter and wait. Pretty quick and painless.
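To confirm both installs actually worked, a small check like this can help. It’s only a sketch: have_tool is a hypothetical helper name, and all it does is ask the shell whether a command is on your PATH.

```shell
# Report whether a command-line tool is available on the PATH.
have_tool() {
  if command -v "$1" >/dev/null 2>&1; then
    echo "$1 is installed"
  else
    echo "$1 is missing" >&2
    return 1
  fi
}

# "|| true" keeps the script going even when a tool is missing.
have_tool brew || true
have_tool wget || true
```

If wget shows up as missing right after installing, try opening a new Terminal window so it picks up the updated PATH.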

The Magic Command

Here’s where it gets interesting. This one command does everything:

    wget --mirror --convert-links --adjust-extension --page-requisites --no-parent https://example.com

I know, it looks like gibberish. Let me break down what each part actually does:

--mirror tells wget to download the entire site, following all the links

--convert-links changes the links so they work on your computer instead of pointing back to the internet

--adjust-extension makes sure files have the right extensions like .html

--page-requisites grabs all the images, CSS files, and other stuff the page needs to look right

--no-parent keeps wget from wandering off and downloading stuff from parent directories

Just replace https://example.com with whatever site you want to download.
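If you’d rather not retype all those flags, here’s one way to wrap them in a shell function. Treat it as a sketch: mirror_site and the DRY_RUN switch are names invented for this example, not part of wget. With DRY_RUN=1 it only prints the command, so you can check it before anything downloads.

```shell
# Build the mirror command from this guide. With DRY_RUN=1 the command is
# printed instead of executed.
mirror_site() {
  url="$1"
  if [ -z "$url" ]; then
    echo "usage: mirror_site URL" >&2
    return 2
  fi
  # Store the full command as the function's argument list.
  set -- wget --mirror --convert-links --adjust-extension \
         --page-requisites --no-parent "$url"
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Preview the command without downloading anything.
DRY_RUN=1 mirror_site https://example.com
```

Drop the DRY_RUN=1 prefix when you’re ready to run the real download.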

Watching It Work

Hit Enter and wget starts downloading. You’ll see each file as it’s grabbed. It’s not the prettiest interface, but you can see exactly what’s happening.

For big sites, this might take a while. Go do something else and check back later.

Where’d Everything Go?

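Wget creates a folder named after the website’s domain, inside whatever directory you ran the command from. A fresh Terminal window starts in your home directory, so if you downloaded example.com without changing folders first, look for a folder called “example.com” there.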

Open that folder, find index.html, double-click, and you’re looking at your locally downloaded site.

Why I Love wget

It works on basically any website. It’s free. It’s incredibly customizable if you want to get fancy with it.

The downside? You need to be comfortable with the terminal. And yeah, those commands look intimidating at first.

But once you get the hang of it, wget becomes your best friend. I use it all the time for larger, more complex downloads.

Method 3: HTTrack (The Middle Ground)

HTTrack is kind of the goldilocks option – not too simple, not too complex. Just right.

It’s got a graphical interface like SiteSucker, but with way more power and options like wget.

Installing HTTrack

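Go to the official HTTrack website to read up on it. It’s free and open source. One catch: the download page offers installers for Windows and Linux, not a drag-and-drop Mac app. On a Mac, the usual route is Homebrew, so run brew install httrack in Terminal.

The walkthrough below follows HTTrack’s wizard-style flow. The same choices apply (project name, URL, options), but the exact screens depend on which front end you end up using.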

Creating Your First Project

Open HTTrack. It works with “projects”, which are basically organized downloads with their own settings.

Click to create a new project. Give it a name like “ExampleSite” or whatever you want. Pick where you want to save everything.

Telling It What to Download

Next screen asks for the URL. Paste in the website address you want to download.

Cool thing about HTTrack: you can add multiple URLs if you want to download from several sites at once. Pretty handy.

Customizing Your Download

This is where HTTrack shines. Click “Set Options” and you’ll see tons of choices.

Don’t get overwhelmed. Here are the important ones:

Download depth – how many levels deep it should follow links. 2 or 3 is usually good.

File types – pick what to include or exclude. Maybe you don’t want videos taking up space.

Speed limits – throttle the download so you’re not hogging all your bandwidth.

Most of the default settings work fine though. You can just leave them and be good to go.

Starting the Download

Click Next, then Finish, and HTTrack gets to work.

It shows you detailed progress – files downloaded, estimated time left, all that good stuff. Way more info than SiteSucker gives you.

Checking Out Your Downloaded Site

When it’s done, go to your project folder. HTTrack organizes everything nicely and even creates a shortcut that opens the site properly.

Click index.html and you’re browsing the site locally.

My Take on HTTrack

It’s my go-to when I need more control than SiteSucker but don’t want to mess with terminal commands.

The interface looks a bit old-school, not gonna lie. But it works really well.

Sometimes it struggles with super modern JavaScript frameworks, but for most sites, it’s fantastic.

Method 4: Safari’s Quick Save (For Single Pages)

Sometimes you don’t need the whole website. Maybe there’s just one article you want to keep, or a single reference page.

Safari’s got a built-in feature for exactly this.

How to Do It

Open the page you want in Safari.

Click File in the menu bar, then “Save As.”

In the Format dropdown, pick “Web Archive.”

This saves the complete page – text, images, formatting, everything – in one neat file.

When to Use This

I use this method all the time for quick saves. Found a great recipe? Save it. Awesome tutorial? Save it. Important reference page? You get the idea.

It’s fast, it’s built right into your Mac, and you don’t need any extra software.

The Catch

It only saves that one page. You’re not getting the whole site, just what you’re looking at.

If you click a link in the saved page, it’ll try to go to the internet. So it’s really just for saving individual pages you want to reference later.

Think of it like bookmarking, but better because you actually have the content saved.

If you like keeping things under control online, just like when you stop distractions on your phone, saving important pages offline gives you that same sense of calm and control.

Getting Your Downloaded Site to Actually Work Right

Here’s something nobody tells you upfront: sometimes downloaded sites need a bit of help to work properly.

Simple HTML sites? They’ll open fine by just clicking the index file. But modern sites with fancy JavaScript and interactive features? They might need a local server.

The Python Trick (Easiest)

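Your Mac probably has Python 3 within reach. Recent versions of macOS don’t include it out of the box, but the python3 command becomes available once Apple’s Command Line Tools are installed (macOS offers to install them the first time you run python3, and the Homebrew setup from Method 2 installs them too). Once it’s there, we can use it to run a simple server in like 10 seconds.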

Open Terminal and navigate to your downloaded site folder:

    cd ~/path/to/your/website/folder

Then run:

    python3 -m http.server 8000

Open your browser and type http://localhost:8000 in the address bar.

That’s it. Your site is now running on a local server. Way more features will work this way.
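A common slip is starting the server from the wrong folder and getting a bare directory listing. Here’s a small sketch that guards against that. The name serve_site is hypothetical, and it assumes Python 3.7 or newer, since it relies on the --directory option of http.server.

```shell
# Serve a downloaded site folder with Python's built-in web server.
# Refuses to start if the folder has no index.html, which usually means
# the path points at the wrong directory.
serve_site() {
  site_dir="${1:-.}"
  port="${2:-8000}"
  if [ ! -f "$site_dir/index.html" ]; then
    echo "No index.html in $site_dir" >&2
    return 1
  fi
  echo "Serving $site_dir at http://localhost:$port (Ctrl+C to stop)"
  python3 -m http.server "$port" --directory "$site_dir"
}

# Example (commented out so pasting this block does not start a server):
# serve_site ~/example.com 8000
```

The server keeps running until you press Ctrl+C in that Terminal window.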

Using MAMP (If You Need the Full Deal)

MAMP stands for Mac, Apache, MySQL, PHP. It’s basically a complete web server setup for your Mac.

Download MAMP from their site and install it. The free version works great.

Open MAMP and click “Start Servers.”

Copy your downloaded website folder into MAMP’s htdocs folder.

Go to http://localhost:8888/your-folder-name in your browser.

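MAMP is overkill for simple static sites. It earns its place when you’re working with the actual source of a WordPress or other PHP-based site. Keep in mind that a copy made with the tools above contains only the rendered pages, not the PHP code or database behind them, so a mirrored site doesn’t need PHP to run.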

The Node.js Option

If you have Node.js installed (lots of developers do), there’s a super simple package called http-server.

Install it:

    npm install -g http-server

Go to your website folder and run:

    http-server

Done. It tells you what URL to open.

I use this one a lot because it’s fast and lightweight.

When Things Go Wrong (And How to Fix Them)

Even when you do everything right, sometimes stuff breaks. Here’s what usually goes wrong and how to fix it.

Missing Images or Broken Styling

You open the site and it looks terrible – no images, weird formatting, just a mess.

This usually means the download didn’t grab all the resources. Maybe the server blocked some requests, or links were structured weird.

Try downloading again. Sometimes a second attempt catches what the first one missed. With wget, make sure you’re using that --page-requisites flag.
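Before re-downloading, it can help to see what actually landed on disk. This is a rough sketch (count_by_type is a made-up name) that tallies files by extension. If you see plenty of html but no css, png, or jpg, the page resources were probably skipped.

```shell
# Count downloaded files by extension to spot missing images or stylesheets.
count_by_type() {
  site_dir="${1:-.}"
  find "$site_dir" -type f -name '*.*' \
    | sed 's/.*\.//' \
    | tr '[:upper:]' '[:lower:]' \
    | sort | uniq -c | sort -rn
}

# Example (commented out; point it at your own download folder):
# count_by_type ~/example.com
```

It’s a blunt check: files saved with query strings in their names will show up under odd “extensions”, so read the output as a hint rather than an exact inventory.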

Links Don’t Work

You click a link and get “file not found” errors.

This happens when links weren’t converted to work locally. They’re still pointing to the online version.

In wget, use --convert-links. In SiteSucker, check that “Convert Links” is enabled. HTTrack usually handles this automatically, but double-check the settings.
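To find out which pages still point back at the live site, you can search the downloaded HTML for absolute links. A minimal sketch, assuming the pages end in .html. The function name find_unconverted_links is invented for this example.

```shell
# List HTML files that still contain absolute href/src links to the
# original domain. Usage: find_unconverted_links FOLDER DOMAIN
find_unconverted_links() {
  site_dir="$1"
  domain="$2"
  grep -rlE --include='*.html' "(href|src)=[\"']https?://$domain" "$site_dir"
}

# Example (commented out; adjust the folder and domain):
# find_unconverted_links ~/example.com example.com
```

No output means no matches. Any files it does list are the ones to check after you re-run the download with link conversion turned on.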

JavaScript Features Are Broken

The site looks okay but interactive stuff doesn’t work – buttons don’t respond, animations are frozen, that kind of thing.

Modern sites often rely on external APIs or server-side processing. That stuff just won’t work offline.

Your best bet is running the site on a local server (like we covered above) instead of opening files directly. That helps, but some features still might not work. It’s just the nature of how modern websites are built.

Getting Blocked

The download starts but then stops with “access denied” or “403 forbidden” errors.

Some sites actively try to prevent downloading. They don’t want people copying their content.

With wget, add delays between requests using --wait=2 (waits 2 seconds between files). This makes you look more like a human browsing normally instead of a robot scraping content.

If a site really doesn’t want to be downloaded, respect that and move on.

The Download Never Ends

You started downloading hours ago and it’s still going. Will it ever finish?

Huge sites with thousands of pages take forever. Sometimes too long to be practical.

Set limits. In wget, use --level=2 to only go 2 levels deep into the site structure. In SiteSucker, adjust the depth settings. You don’t always need every single page.
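Here’s how the depth limit and the delay from the previous section might fit into the full command. It’s a sketch that only prints the command so you can review it first, and limited_mirror_cmd is a hypothetical name. As far as I understand wget’s option handling, --level needs to come after --mirror, because --mirror sets the depth to unlimited.

```shell
# Print a depth-limited, slower-paced variant of the mirror command.
# Usage: limited_mirror_cmd URL [DEPTH] [WAIT_SECONDS]
limited_mirror_cmd() {
  url="$1"
  depth="${2:-2}"
  wait_s="${3:-2}"
  echo "wget --mirror --level=$depth --wait=$wait_s --convert-links" \
       "--adjust-extension --page-requisites --no-parent $url"
}

limited_mirror_cmd https://example.com 2 2
```

Copy the printed line into Terminal when it looks right. Start with a shallow depth and raise it only if pages you need are missing.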

I’ve hit all these problems at some point. They’re annoying but fixable with a bit of patience.

Smart Ways to Download Websites

After doing this for years, I’ve figured out some tricks that make everything smoother.

Start Small

Don’t jump straight into downloading a massive site. Test with something small first – a simple blog or portfolio site.

This lets you figure out the tool and catch problems early without wasting hours on a failed download.

Check the Size First

Try to get a sense of how big the site is before downloading. Small blog? Probably fine. News site with 10 years of archives? That’s gonna be huge.

You don’t want to realize you just downloaded 50GB after the fact.

Be Nice to Servers

Add delays between requests. Hammering a server with rapid-fire downloads is rude and might get you blocked.

Using --wait=1 with wget is polite and prevents you from overwhelming someone’s server.

Stay Organized

Create one folder for all your downloaded sites. Seriously, this saves so much headache later.

I have a “Downloaded Sites” folder in my Documents. When I need something, I know exactly where to look.

Write Down What You Did

If you used special settings or commands, jot them down. Maybe in a text file in the same folder.

When you need to update the site in six months, you’ll thank yourself for remembering what worked.
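One low-effort way to do this is to append the command to a notes file right inside the site’s folder. A small sketch, where note_download and the NOTES.txt filename are just choices made for this example:

```shell
# Append a dated note (for example, the exact command you ran) to NOTES.txt
# inside a downloaded site's folder.
# Usage: note_download FOLDER words of the note...
note_download() {
  site_dir="$1"
  shift
  printf '%s  %s\n' "$(date +%Y-%m-%d)" "$*" >> "$site_dir/NOTES.txt"
}

# Example (commented out; adjust the folder):
# note_download ~/example.com wget --mirror --wait=1 https://example.com
```

Each call adds one line, so the file turns into a simple history of what you ran and when.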

Test Right Away

Don’t wait to check if the download worked. Open it immediately and click around.

Way easier to fix problems while it’s fresh in your mind than coming back weeks later.

Clean Up Regularly

Downloaded sites pile up fast. Every few months, I go through and delete ones I don’t need anymore.

Keeps your storage from getting clogged and makes it easier to find what you actually want.
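To decide what to delete, it helps to see which downloads are eating the most space. Here’s a sketch that lists each site folder by size, largest first. The name site_sizes is hypothetical, and the default path assumes a “Downloaded Sites” folder in Documents like mine.

```shell
# List each downloaded site folder with its size in megabytes, largest first.
site_sizes() {
  root="${1:-$HOME/Documents/Downloaded Sites}"
  # du -sk prints "kilobytes<TAB>path" for each top-level folder.
  find "$root" -mindepth 1 -maxdepth 1 -type d -exec du -sk {} + \
    | sort -rn \
    | awk -F'\t' '{ printf "%d MB\t%s\n", $1 / 1024, $2 }'
}

# Example (commented out; pass your own folder if it lives elsewhere):
# site_sizes ~/Documents/"Downloaded Sites"
```

Sizes are rounded down, so small sites show up as 0 MB.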

Just like when you block extensions to keep things running smoothly, being careful with downloads helps your storage stay neat and organized.

Questions People Always Ask

Can you really download an entire website on Mac?

Yep, absolutely. It’s easier than most people think. Tools like SiteSucker, wget, and HTTrack handle the whole process. You paste a URL, click download, and wait. The hard part is just picking which tool to use.

What’s easiest for someone who’s never done this?

SiteSucker, hands down. It’s made specifically for Mac and the interface is super straightforward. You don’t need to know anything about coding or the terminal. Just install it, paste a URL, and you’re good to go.

Will I get in trouble for downloading websites?

Not if you’re doing it for yourself. Personal use, learning, backup – all totally fine. Where you’d get in trouble is redistributing the content, selling it, or passing it off as your own work. Just use common sense and respect copyright.

How do I get wget working on my Mac?

First install Homebrew, then use it to install wget. Takes about 10 minutes total. I walked through the exact steps earlier in this guide – just copy and paste the commands I gave you.

What about sites that are super JavaScript-heavy?

They’re trickier. You can download the files, but some features won’t work offline because they need server connections or external APIs. Your best shot is running the site on a local server, but even then, some stuff just won’t function without internet.

How much space am I gonna need?

Depends wildly on the site. A basic blog might be 50MB. A site packed with images and videos could be several gigabytes. Try to estimate before downloading so you don’t fill up your hard drive.

Will everything work offline?

Static content – HTML, CSS, images – works great offline. Things that need internet connections won’t work. Comments, ads, embedded YouTube videos, live data – that stuff needs the internet. But most of the actual content will be fine.

Can I update the site later if it changes?

Sure. Just download it again. Most tools will update the files that changed and add new ones. Some might re-download everything, which takes longer but ensures you have the latest version.

Wrapping This Up

So there you have it. Four different ways to download websites to your Mac.

SiteSucker if you want dead simple. Wget if you like having total control. HTTrack if you want something in between. Safari’s Save As if you just need one page.

I use all of them depending on what I’m trying to do. There’s no “best” method – just different tools for different situations.

The important thing is respecting the websites you’re downloading. Don’t steal people’s work. Don’t republish content that isn’t yours. Use downloads for learning, reference, and personal backup.

Now you know how to do this. Pick the method that feels right for you and give it a shot.

What’s the first site you’re going to download? Or if you’ve tried this already, how’d it go? I’d love to hear about it in the comments.

Happy downloading!